Deploying a Ceph Cluster (Part 1)

I. Environment requirements

Server OS: centos7.9_x86_64

Ceph release: luminous

II. Environment plan

| Node type | Host IP | Hostname | System disk | Data disk 1 | Data disk 2 |
| --- | --- | --- | --- | --- | --- |
| admin node | 192.168.201.130 | ceph-admin | sda | | |
| mon node | 192.168.201.131 | ceph-mon1 | sda | | |
| mon node | 192.168.201.132 | ceph-mon2 | sda | | |
| mon node | 192.168.201.133 | ceph-mon3 | sda | | |
| mgr node | 192.168.201.134 | ceph-mgr1 | sda | | |
| mgr node | 192.168.201.135 | ceph-mgr2 | sda | | |
| store node | 192.168.201.136 | ceph-store1 | sda | sdb | sdc |
| store node | 192.168.201.137 | ceph-store2 | sda | sdb | sdc |
| store node | 192.168.201.138 | ceph-store3 | sda | sdb | sdc |
| client | 192.168.201.140 | ceph-client | sda | can be prepared later | |
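
The plan above can be kept in one place and reused by later steps. The following is a minimal sketch under that assumption: the function names cluster_nodes and nodes_by_role are hypothetical helpers (not part of Ceph or ceph-deploy), and the list covers only the nine cluster nodes, since the client is prepared later.

```shell
# Node plan from the table above, one "IP hostname" pair per line.
# cluster_nodes is a hypothetical helper name, not something ceph-deploy provides.
cluster_nodes() {
    cat <<'EOF'
192.168.201.130 ceph-admin
192.168.201.131 ceph-mon1
192.168.201.132 ceph-mon2
192.168.201.133 ceph-mon3
192.168.201.134 ceph-mgr1
192.168.201.135 ceph-mgr2
192.168.201.136 ceph-store1
192.168.201.137 ceph-store2
192.168.201.138 ceph-store3
EOF
}

# nodes_by_role ROLE: print the hostnames whose name starts with "ceph-ROLE",
# for example "mon", "mgr" or "store".
nodes_by_role() {
    cluster_nodes | awk -v role="$1" '$2 ~ ("^ceph-" role) {print $2}'
}

cluster_nodes          # lines in the format used by /etc/hosts
nodes_by_role store    # only the store node hostnames
```

The output of cluster_nodes has the same shape as the /etc/hosts entries added in the environment preparation, so the two should stay in sync if the plan changes.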

III. Environment preparation

1. System optimization

yum install -y wget vim bash-completion lrzsz net-tools nfs-utils yum-utils rdate ntpdate

PS1="\[\e[1;32m\][\t \[\e[1;33m\]\u\[\e[35m\]@\h\[\e[1;31m\] \W\[\e[1;32m\]]\[\e[0m\]\\$"

echo 'PS1="\[\e[1;32m\][\t \[\e[1;33m\]\u\[\e[35m\]@\h\[\e[1;31m\] \W\[\e[1;32m\]]\[\e[0m\]\\$"' >>/etc/profile

echo 'export HISTTIMEFORMAT="%F %T `whoami` "' >>/etc/bashrc

echo -e "ClientAliveInterval 30 \nClientAliveCountMax 86400" >>/etc/ssh/sshd_config

#sed -i '/#Port 22/a Port 52113' /etc/ssh/sshd_config

#rdate -s time.nist.gov

ntpdate 61.160.213.184

clock -w

echo "0 */1 * * * /usr/sbin/ntpdate 61.160.213.184 &> /dev/null" >> /var/spool/cron/root

#echo "0 */1 * * * /usr/bin/rdate -s time.nist.gov &> /dev/null" >> /var/spool/cron/root

mkdir /etc/yum.repos.d/bak

\mv /etc/yum.repos.d/*.repo /etc/yum.repos.d/bak

wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

sed -ri 's@(.*aliyuncs)@#\1@g' /etc/yum.repos.d/CentOS-Base.repo

yum clean all

yum makecache

#yum update -y --exclude=kernel* --exclude=centos-release* --skip-broken

echo 'LANG="en_US.UTF-8"' >/etc/locale.conf

. /etc/locale.conf

echo "net.ipv4.ip_forward = 1" >>/etc/sysctl.conf

sysctl -p

sed -i '/UseDNS/a UseDNS no' /etc/ssh/sshd_config

systemctl restart sshd

systemctl stop firewalld.service

systemctl disable firewalld.service

sed -ri 's#^(SELINUX=).*$#\1disabled#g' /etc/selinux/config

setenforce 0

systemctl stop NetworkManager

systemctl disable NetworkManager

2. Time synchronization (already configured in the system optimization step above)
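
Several commands in the system optimization step append lines to configuration files with >>, so running the step twice leaves duplicate entries (for example in /etc/sysctl.conf or the root crontab). One possible way to make such appends repeatable is sketched below; append_once is a hypothetical helper name, and the demonstration writes to a scratch file rather than to a real system file.

```shell
# append_once FILE LINE: append LINE to FILE only if FILE does not already
# contain exactly that line. (Hypothetical helper, not in the original steps.)
append_once() {
    file=$1
    line=$2
    # -q quiet, -x match the whole line, -F treat LINE as a fixed string
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Demonstration against a scratch file instead of /etc/sysctl.conf.
demo_file=$(mktemp)
append_once "$demo_file" "net.ipv4.ip_forward = 1"
append_once "$demo_file" "net.ipv4.ip_forward = 1"   # second call changes nothing
cat "$demo_file"
```

On a real node the same call would take /etc/sysctl.conf or /var/spool/cron/root as the first argument.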

3. Set the hostname on every node and make the nodes resolve each other

# Run the matching command on each node

hostnamectl set-hostname ceph-admin

hostnamectl set-hostname ceph-mon1

hostnamectl set-hostname ceph-mon2

hostnamectl set-hostname ceph-mon3

hostnamectl set-hostname ceph-mgr1

hostnamectl set-hostname ceph-mgr2

hostnamectl set-hostname ceph-store1

hostnamectl set-hostname ceph-store2

hostnamectl set-hostname ceph-store3

cat >>/etc/hosts<<EOF

192.168.201.130 ceph-admin

192.168.201.131 ceph-mon1

192.168.201.132 ceph-mon2

192.168.201.133 ceph-mon3

192.168.201.134 ceph-mgr1

192.168.201.135 ceph-mgr2

192.168.201.136 ceph-store1

192.168.201.137 ceph-store2

192.168.201.138 ceph-store3

EOF

4. On every node, create the regular user cephu, set its password, and grant it sudo privileges

useradd cephu && echo qweqwe | passwd --stdin cephu

cat >>/etc/sudoers<<EOF

cephu ALL=(root) NOPASSWD:ALL

EOF

5. On the admin node, generate an SSH key pair as the regular user cephu and distribute it to all nodes

su - cephu

# Press Enter three times to accept the defaults

ssh-keygen

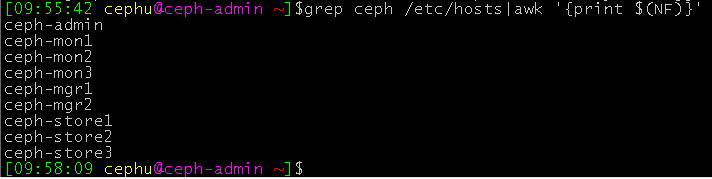

for i in `grep ceph /etc/hosts|awk '{print $(NF)}'`;do ssh-copy-id $i;done

Note: the following command lists every hostname in the cluster

grep ceph /etc/hosts|awk '{print $(NF)}'
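
The grep above matches any line that contains "ceph", including commented-out entries. A slightly stricter variant is sketched here as an assumption about what is wanted: cluster_hosts is a hypothetical helper, and the sample file (with its made-up commented entry ceph-old) exists only for the demonstration.

```shell
# cluster_hosts [FILE]: print the last field of every non-comment line whose
# hostname starts with "ceph-". FILE defaults to /etc/hosts.
cluster_hosts() {
    awk '!/^[[:space:]]*#/ && $NF ~ /^ceph-/ {print $NF}' "${1:-/etc/hosts}"
}

# Scratch hosts file for the demonstration; ceph-old is an invented entry.
sample=$(mktemp)
cat > "$sample" <<'EOF'
127.0.0.1 localhost
# 192.168.201.139 ceph-old
192.168.201.130 ceph-admin
192.168.201.131 ceph-mon1
EOF

# Dry run: print the ssh-copy-id commands instead of executing them.
for h in $(cluster_hosts "$sample"); do
    echo "ssh-copy-id $h"
done
```

Dropping the echo and the file argument would run ssh-copy-id against the hosts found in /etc/hosts, as the original loop does.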

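6. When connecting to a remote host, ssh on CentOS defaults to the current account (root here) and port 22; change the default user name to cephu (run as root on the admin node)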

cat >/home/cephu/.ssh/config<<EOF

Host ceph-admin

Hostname ceph-admin

User cephu

Host ceph-mon1

Hostname ceph-mon1

User cephu

Host ceph-mon2

Hostname ceph-mon2

User cephu

Host ceph-mon3

Hostname ceph-mon3

User cephu

Host ceph-mgr1

Hostname ceph-mgr1

User cephu

Host ceph-mgr2

Hostname ceph-mgr2

User cephu

Host ceph-store1

Hostname ceph-store1

User cephu

Host ceph-store2

Hostname ceph-store2

User cephu

Host ceph-store3

Hostname ceph-store3

User cephu

EOF

Notes:

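Host ceph-admin: the alias, matching the name in the hosts file

Hostname ceph-admin: the host name, matching the system hostname that was set

User cephu: the regular user created on the system

Port 52113: the port can also be set here if needed

With these settings (including the optional Port line), ssh ceph-mon1 is equivalent to ssh -p 52113 cephu@ceph-mon1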

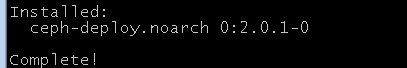
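
The stanzas in step 6 differ only in the host name, so they can also be generated. The sketch below prints the same three settings per host; gen_ssh_config is a hypothetical helper name, and the output goes to standard output so it can be reviewed before being written anywhere.

```shell
# gen_ssh_config USER HOST...: print one Host stanza per hostname, with the
# same Host / Hostname / User settings used in step 6.
gen_ssh_config() {
    user=$1
    shift
    for host in "$@"; do
        printf 'Host %s\n    Hostname %s\n    User %s\n' "$host" "$host" "$user"
    done
}

gen_ssh_config cephu ceph-admin ceph-mon1 ceph-mon2 ceph-mon3 \
    ceph-mgr1 ceph-mgr2 ceph-store1 ceph-store2 ceph-store3
```

Redirecting the output to /home/cephu/.ssh/config and setting mode 600 on the file would give the same result as the heredoc in step 6.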

7. Add the ceph-deploy repository and install ceph-deploy (run as root on the admin node)

cat > /etc/yum.repos.d/ceph.repo<<EOF

[ceph-noarch]

name=ceph noarch packages

baseurl=https://download.ceph.com/rpm-luminous/el7/noarch

enabled=1

gpgcheck=1

type=rpm-md

gpgkey=https://download.ceph.com/keys/release.asc

EOF

yum install -y ceph-deploy

IV. Deploying the Ceph cluster

1. Create the ceph-deploy working directory and install the Python dependency (run as cephu on the admin node)

su - cephu

mkdir my-cluster

wget https://files.pythonhosted.org/packages/5f/ad/1fde06877a8d7d5c9b60eff7de2d452f639916ae1d48f0b8f97bf97e570a/distribute-0.7.3.zip

sudo yum install -y unzip

unzip distribute-0.7.3.zip

cd distribute-0.7.3/

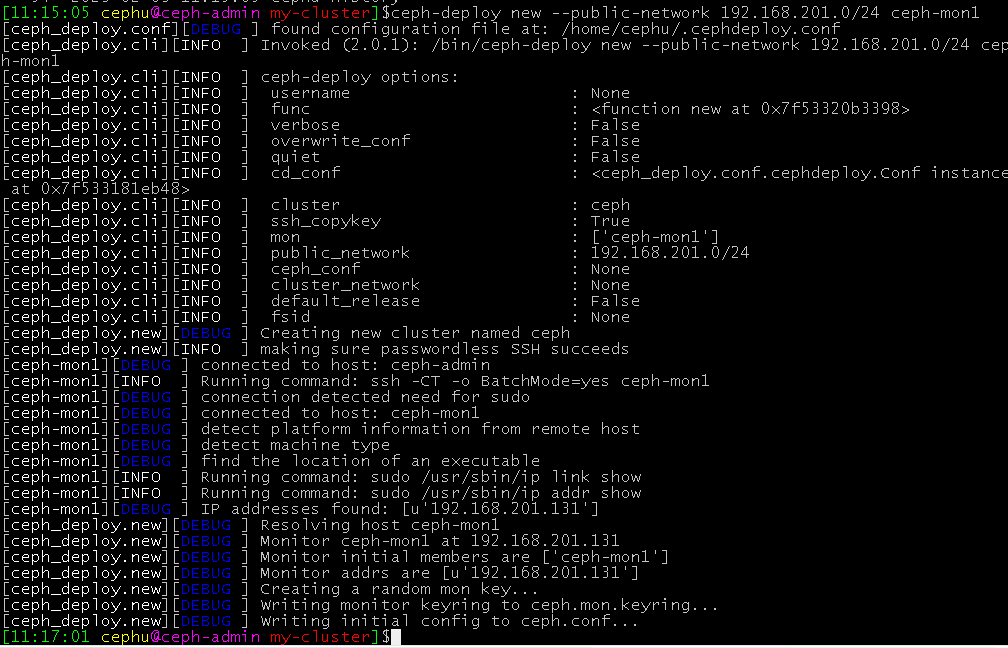

sudo python setup.py install

2. Create the initial monitor

cd ../my-cluster/

sudo cp /root/.ssh/config /home/cephu/.ssh/

chmod 600 /home/cephu/.ssh/config

# No cluster network (--cluster-network) is specified here

ceph-deploy new --public-network 192.168.201.0/24 ceph-mon1

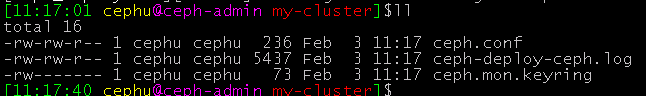

On success, three files are generated in the directory
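
A quick way to confirm the result is to check for the files by name. The names used below (ceph.conf, ceph.mon.keyring, ceph-deploy-ceph.log) are what ceph-deploy new typically writes, but they are an assumption here because the text does not list them; check_new_outputs is a hypothetical helper.

```shell
# check_new_outputs [DIR]: report whether the files expected after
# "ceph-deploy new" exist in DIR (default: current directory).
# The three file names are assumed, not taken from the original text.
check_new_outputs() {
    dir=${1:-.}
    missing=0
    for f in ceph.conf ceph.mon.keyring ceph-deploy-ceph.log; do
        if [ -e "$dir/$f" ]; then
            echo "ok       $f"
        else
            echo "missing  $f"
            missing=1
        fi
    done
    return "$missing"
}

# Example call (on the admin node as cephu):
#   check_new_outputs /home/cephu/my-cluster
```

The function returns a non-zero status if any file is missing, so it can gate the next step in a script.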

3. Configure the Ceph package repository on the cluster nodes (run as root on the store, mon, and mgr nodes)

su -

cat >/etc/yum.repos.d/ceph.repo<<'EOF'

[ceph]

name=ceph packages for $basearch

baseurl=http://mirrors.aliyun.com/ceph/rpm-luminous/el7/$basearch

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

priority=1

[ceph-noarch]

name=ceph noarch packages

baseurl=http://mirrors.aliyun.com/ceph/rpm-luminous/el7/noarch

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

priority=1

[ceph-source]

name=ceph source packages

baseurl=http://mirrors.aliyun.com/ceph/rpm-luminous/el7/SRPMS

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

priority=1

EOF

#有阿里的epel源话就不需要安装官网的epel源了

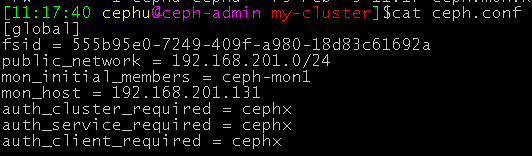

#yum install -y epel-release切换到cephu普通用户安装的是L(luminous)版的ceph(在store和mon和mgr节点操作)

su - cephu

sudo yum install -y ceph ceph-radosgw

ceph --version
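
To check the installed release in a script rather than by eye, the version string can be parsed. This is a sketch that assumes the output starts with "ceph version X.Y.Z" and relies on luminous being the 12.x series; ceph_major and is_luminous are hypothetical helper names, and the sample string uses a placeholder instead of a real commit hash.

```shell
# ceph_major STRING: print the major version from a "ceph --version" string,
# assuming the third whitespace-separated field looks like 12.2.13.
ceph_major() {
    printf '%s\n' "$1" | awk '{split($3, v, "."); print v[1]}'
}

# is_luminous STRING: succeed if the major version is 12 (the luminous series).
is_luminous() {
    [ "$(ceph_major "$1")" = "12" ]
}

# Illustrative input only; on a node use: is_luminous "$(ceph --version)"
sample_version="ceph version 12.2.13 (<commit>) luminous (stable)"
if is_luminous "$sample_version"; then
    echo "luminous detected"
fi
```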

4. Initialize the monitor node from the admin node (run as cephu)

#su - cephu

cd /home/cephu/my-cluster/

#初始化monitor节点

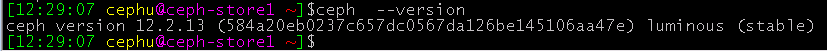

ceph-deploy mon create-initial目录下就会生成很多文件

把配置文件和admin的密钥推送到ceph集群各节点(mgr节点和store节点和mon节点),以免每次执行ceph命令都要明确MON的地址和ceph.client.admin.keyring

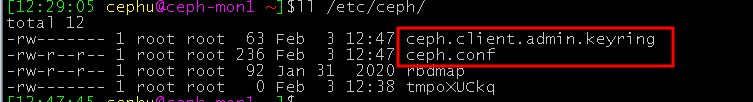

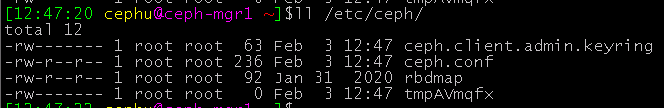

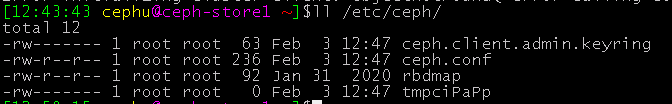

ceph-deploy admin ceph-mon1 ceph-mon2 ceph-mon3 ceph-mgr1 ceph-mgr2 ceph-store1 ceph-store2 ceph-store3 ceph-admin

The cluster nodes now have these files

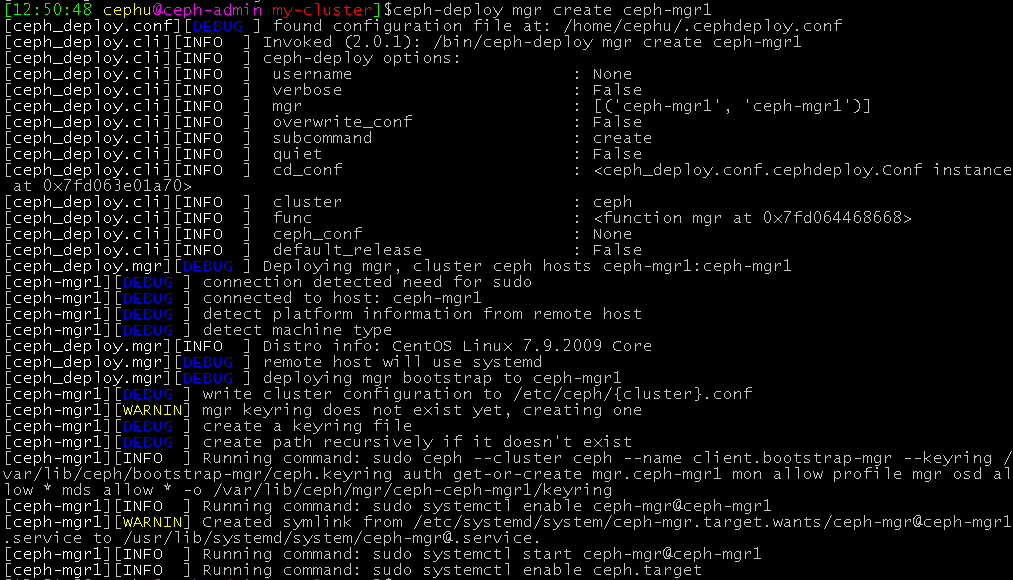

5. Configure the manager node and start the ceph-mgr daemon (run as cephu on the admin node)

ceph-deploy mgr create ceph-mgr1

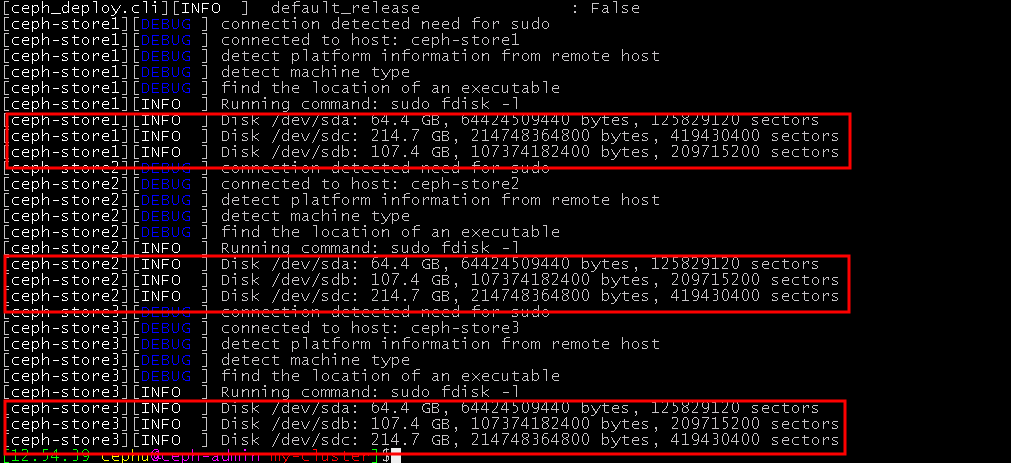

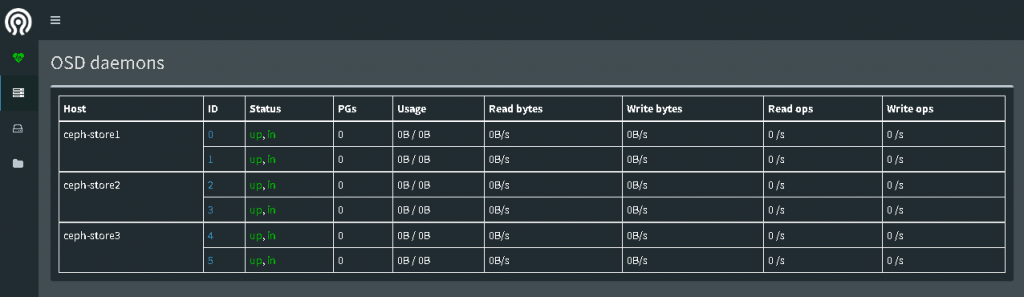

6. List the disks available on the OSD nodes and add OSD disks (run as cephu on the admin node)

ceph-deploy disk list ceph-store1 ceph-store2 ceph-store3

Add (create) the OSD disks; the same command is used to add more disks later

ceph-deploy osd create --data /dev/sdb ceph-store1

ceph-deploy osd create --data /dev/sdc ceph-store1

ceph-deploy osd create --data /dev/sdb ceph-store2

ceph-deploy osd create --data /dev/sdc ceph-store2

ceph-deploy osd create --data /dev/sdb ceph-store3

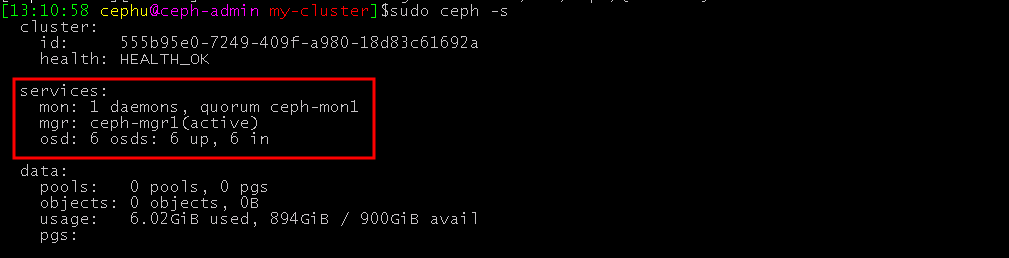

ceph-deploy osd create --data /dev/sdc ceph-store3

7. Check the cluster status (possible on any node that received the keyring in step 4)

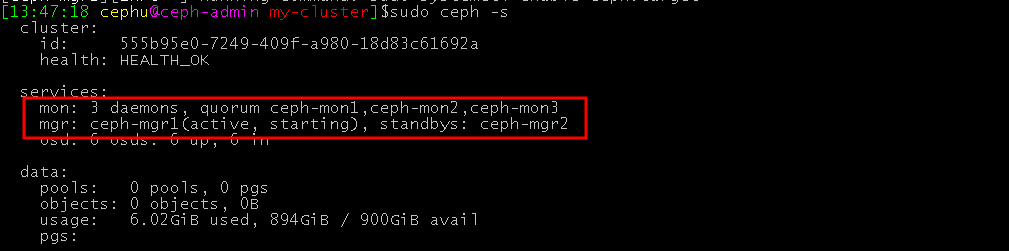

sudo ceph -s

One mon node, one mgr node, and 6 OSD disks (900G total, 6G used, 894G available)
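
The six OSDs in the status correspond to two data disks (sdb and sdc) on each of the three store nodes, created in step 6 with one command per disk. The same commands can be produced by a nested loop, sketched below as a dry run; osd_create_cmds is a hypothetical helper that only prints the commands.

```shell
# osd_create_cmds: print one "ceph-deploy osd create" command per data disk
# on each store node, matching the commands used in step 6.
osd_create_cmds() {
    for host in ceph-store1 ceph-store2 ceph-store3; do
        for disk in /dev/sdb /dev/sdc; do
            echo "ceph-deploy osd create --data $disk $host"
        done
    done
}

osd_create_cmds
```

Printing first makes the list easy to review; piping the output to sh from the my-cluster directory would then execute it.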

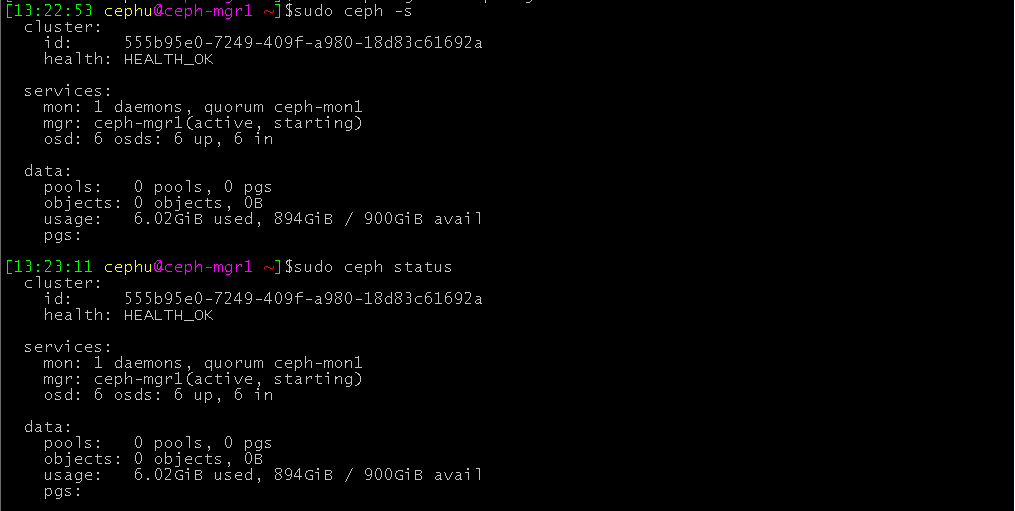

8. Deploy the dashboard on the mgr1 node (run as cephu on ceph-mgr1)

su - cephu

# Create the manager key

sudo ceph auth get-or-create mgr.ceph-mgr1 mon 'allow profile mgr' osd 'allow *' mds 'allow *'

# Start the manager daemon

sudo ceph-mgr -i ceph-mgr1

Check the mgr node status (active, starting)

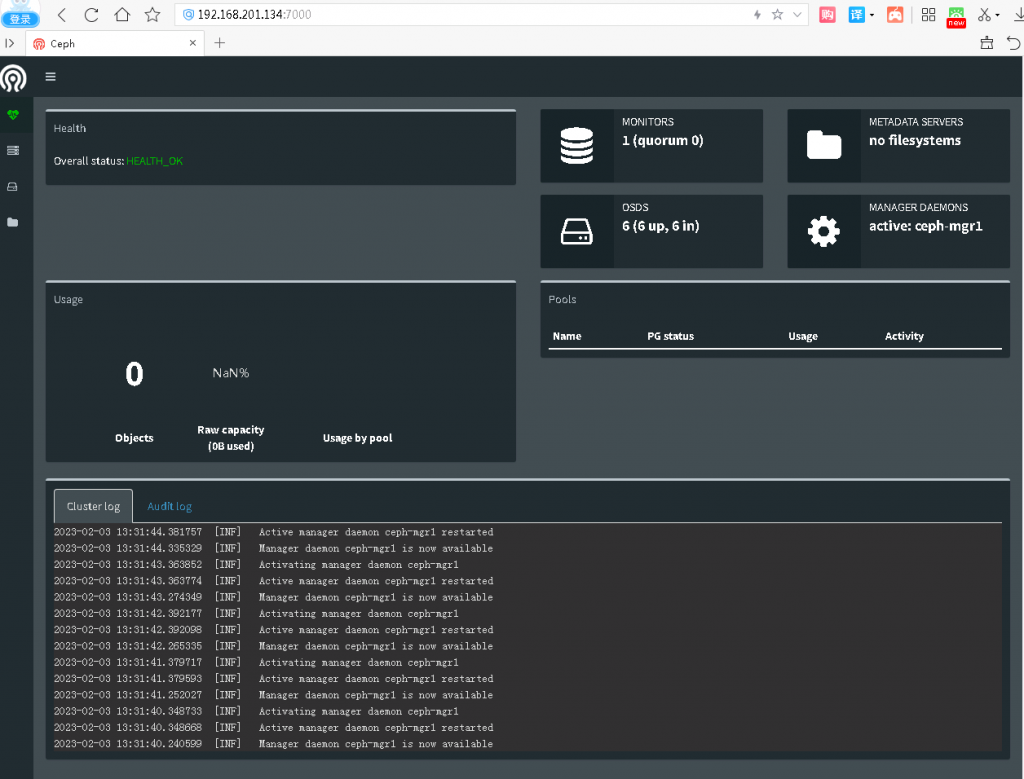

Enable the dashboard module and bind it to the IP address of the host where the module is enabled

sudo ceph mgr module enable dashboard

sudo ceph config-key set mgr/dashboard/ceph-mgr1/server_addr 192.168.201.134

Open the dashboard in a browser (IP address:7000)
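
The dashboard can also be probed from the command line before opening a browser. This is only a sketch: dashboard_url and dashboard_reachable are hypothetical helpers, curl is assumed to be installed, and treating any 2xx or 3xx response as "reachable" is a simplification.

```shell
# dashboard_url IP [PORT]: build the dashboard URL; PORT defaults to 7000,
# the port given in the text above.
dashboard_url() {
    printf 'http://%s:%s/\n' "$1" "${2:-7000}"
}

# dashboard_reachable IP [PORT]: succeed if an HTTP request returns a 2xx or
# 3xx status within 5 seconds. (Hypothetical helper; requires curl.)
dashboard_reachable() {
    code=$(curl -s -o /dev/null --max-time 5 -w '%{http_code}' "$(dashboard_url "$@")")
    case "$code" in
        2*|3*) return 0 ;;
        *)     return 1 ;;
    esac
}

dashboard_url 192.168.201.134
# Example check on a machine that can reach ceph-mgr1:
#   dashboard_reachable 192.168.201.134 && echo "dashboard is up"
```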

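9. Add more monitor and manager nodes (run as the regular user cephu on the admin node)

The cluster status shows only one monitor node and one manager node, which provides no high availability: if the single monitor node goes down, the whole cluster may become unavailable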

ceph-deploy mon add ceph-mon2

ceph-deploy mon add ceph-mon3

ceph-deploy mgr create ceph-mgr2

Check the cluster status

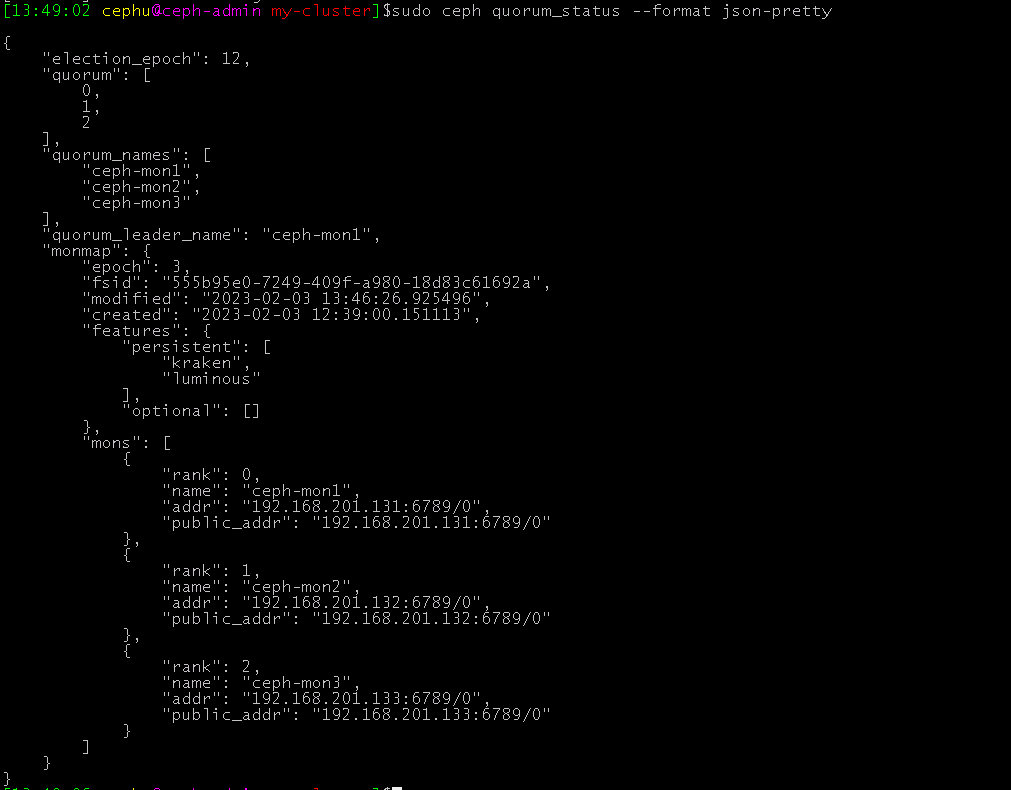

Check the status of the monitors and the quorum:

sudo ceph quorum_status --format json-pretty
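
Monitors form a quorum by majority, which is why going from one monitor to three matters. The arithmetic is sketched below; quorum_size and tolerated_failures are hypothetical helper names used only to illustrate the calculation.

```shell
# quorum_size N: smallest majority of N monitors.
quorum_size() {
    echo $(( $1 / 2 + 1 ))
}

# tolerated_failures N: how many of N monitors can fail while a majority remains.
tolerated_failures() {
    echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 1 3; do
    echo "mons=$n quorum=$(quorum_size "$n") tolerated_failures=$(tolerated_failures "$n")"
done
```

With a single monitor no failure is tolerated, while with the three monitors deployed here one can be lost and the remaining two still form a majority.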

The cluster deployment is now complete
